For two decades, the deployment pipeline was the bottleneck. Now it is the breach point.

AI coding tools have made code generation almost free. But enterprise Quality Assurance was built for a world where humans typed every line and senior engineers reviewed every pull request. That world ended in 2025. The first quarter of 2026 is when the receipts arrived.

Amazon is the most public casualty. The pattern is industry-wide. The data is no longer ambiguous.

Here are 11 data points that show how AI code outran its quality controls, and what United States e-commerce leaders can do about it.

1. Amazon Lost 6.3 Million Orders in a Single Outage

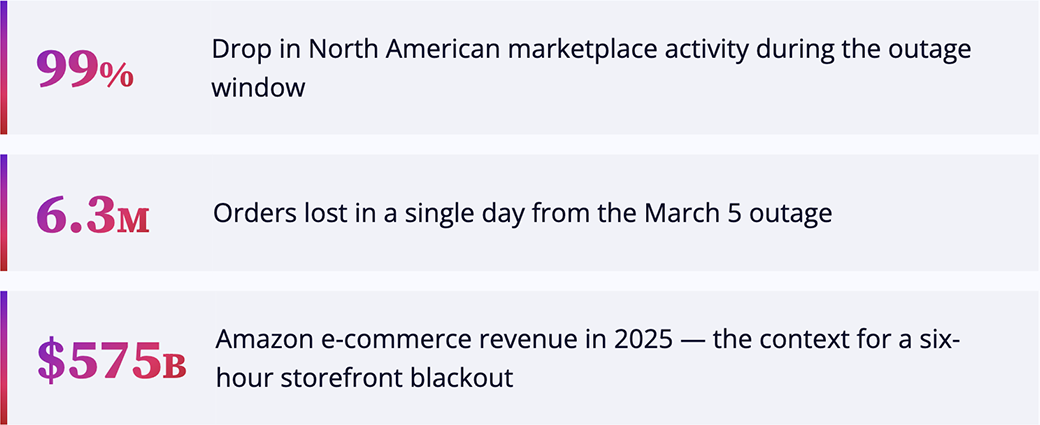

On March 5, 2026, Amazon.com went dark across North America for about six hours. Checkout broke. Login failed. Product pricing disappeared.

Business Insider obtained internal documents tying the disruption to AI-assisted code changes deployed without proper review. The financial impact was historic:

- A reported 99% drop in North American marketplace activity during the outage window

- An estimated 6.3 million lost orders in a single day

- A separate March 2 incident caused 120,000 abandoned orders and 1.6 million website errors from incorrect delivery times

For context, Amazon's e-commerce operation generated roughly $575 billion in 2025. A six-hour storefront blackout is no longer a hypothetical risk for United States retailers. It is a published case study. [1], [2] & [3]

2. The Response: 90-Day Reset, 335 Systems

The fallout was swift and structural. Amazon launched a 90-day code safety reset spanning 335 critical retail systems.

The new rules are basic, which is what makes them notable. Engineers must obtain two reviewers before deployment. AI-assisted code from junior and mid-level engineers requires senior engineer sign-off. Stricter automated checks are now mandatory.
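As an illustration, the three rules above can be expressed as a pre-deployment gate. This is a minimal sketch, not Amazon's actual tooling: the `Reviewer` and `ChangeRequest` types, the seniority labels, and the `deployment_blockers` function are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field

# Hypothetical seniority label; the rules only distinguish junior, mid-level, and senior.
SENIOR = "senior"


@dataclass(frozen=True)
class Reviewer:
    name: str
    level: str  # "junior", "mid", or "senior"


@dataclass
class ChangeRequest:
    author_level: str
    ai_assisted: bool
    approvals: list[Reviewer] = field(default_factory=list)
    automated_checks_passed: bool = False


def deployment_blockers(change: ChangeRequest) -> list[str]:
    """Return the reasons a change may not deploy; an empty list means it may."""
    blockers = []
    # Rule 1: two distinct reviewers before deployment.
    if len({r.name for r in change.approvals}) < 2:
        blockers.append("needs two reviewers")
    # Rule 2: AI-assisted code from non-senior authors needs a senior sign-off.
    needs_senior = change.ai_assisted and change.author_level != SENIOR
    if needs_senior and not any(r.level == SENIOR for r in change.approvals):
        blockers.append("AI-assisted change needs senior sign-off")
    # Rule 3: automated checks are mandatory.
    if not change.automated_checks_passed:
        blockers.append("automated checks have not passed")
    return blockers


change = ChangeRequest(
    author_level="mid",
    ai_assisted=True,
    approvals=[Reviewer("ana", "mid"), Reviewer("raj", "mid")],
    automated_checks_passed=True,
)
print(deployment_blockers(change))  # ['AI-assisted change needs senior sign-off']
```

Returning the list of blockers, rather than a bare yes or no, lets a pipeline tell the engineer exactly which rule stopped the release.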

The internal briefing memo describes a "trend of incidents with high blast radius caused by Gen-AI-assisted changes for which best practices and safeguards are not yet fully established."

This is what a Fortune 5 company looks like when it admits, in writing, that it shipped AI-generated code into production faster than its quality controls could absorb. The number every Chief Technology Officer should worry about is not 6.3 million, but 335.[4] & [5]

3. 43% of AI Code Needs Production Debugging

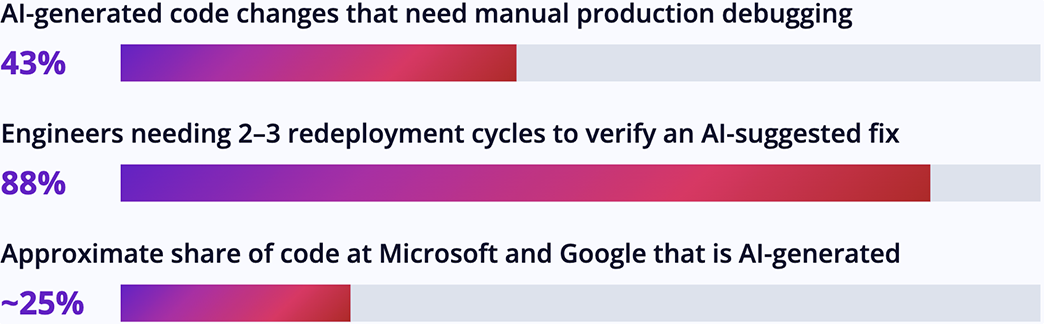

The Lightrun 2026 State of AI-Powered Engineering Report surveyed 200 senior Site Reliability Engineering (SRE) and Development Operations (DevOps) leaders at enterprises with 1,500 or more employees across the United States, United Kingdom, and European Union. The survey was fielded in January and February 2026, and its findings expose the structural gap:

- 43% of AI-generated code changes need manual debugging in production, even after passing Quality Assurance and staging tests

- Not a single organization can verify an AI-suggested fix with just one redeploy cycle

- 88% need 2 to 3 redeploy cycles to verify an AI-suggested fix; 11% need 4 to 6 cycles

- The Chief Executive Officers of both Microsoft and Google have stated that roughly 25% of their companies' code is AI-generated

AI is producing code at unprecedented speed. The systems built to validate it have not kept pace. [6] & [7]

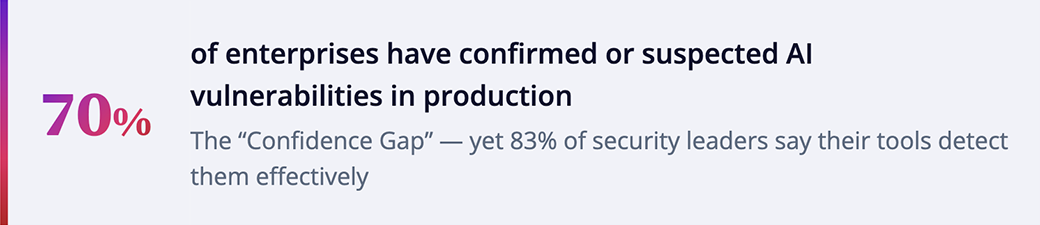

4. 70% Have AI Vulnerabilities in Production Already

The most uncomfortable data point of 2026 is the gap between what security tools think they catch and what they actually catch.

The Purple Book Community's State of AI Risk Management 2026 surveyed 650 senior cybersecurity decision-makers across seven industries. 70% reported confirmed or suspected AI-generated security vulnerabilities in production. Yet 83% of security leaders said their existing tools "effectively detect" them.

The report calls this the "Confidence Gap." It widens in the details:

- 92% of organizations with confirmed AI vulnerabilities in production still say their tools work, meaning detection is happening after deployment, not in the pipeline

- 73% of security leaders agree AI coding tools have accelerated development enough to make security keep-up impossible

- 59% confirm or suspect shadow AI is present in their organization, even though 90% claim full visibility into AI usage

- ProjectDiscovery's 2026 AI Coding Impact Report (April 22) found 66% of security teams now spend more than half their week manually validating AI-generated findings rather than fixing them

For a United States retailer, that is the security validation backlog growing faster than the team can drain it. [8] & [9]

5. Developers Have Lost Faith in AI Tools

The data is most damning when it comes from the engineers themselves. Stack Overflow's February 2026 analysis of developer trust telemetry shows confidence collapsing while adoption climbs:

- Only 29% trust the accuracy of AI output, down 11 percentage points from 2024

- 66% of developers say AI gives "almost right but not quite" answers, the number-one frustration cited

- 45% cite debugging AI-generated code as a top frustration because it is more time-consuming

Google's 2025 DORA report puts the same gap differently. 90% of software developers use AI for coding, but only 24% express "a great deal" or "a lot" of trust in AI output.[10], [11] & [12]

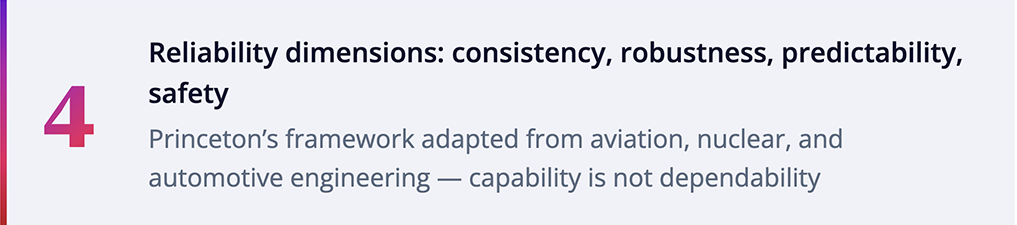

6. Princeton: Reliability is Not Keeping Up with Capability

If you only read one piece of academic work on AI in 2026, it should be the Princeton paper "Towards a Science of AI Agent Reliability" (preprint dated February 24, 2026).

Researchers Stephan Rabanser, Peter Kirgis, and four co-authors evaluated 14 frontier models across two benchmarks. Their headline finding: capability and reliability are not the same metric, and the gap is not closing.

Despite 18 months of accuracy improvements across model releases, "reliability only shows modest overall improvement." The paper finds that outcome consistency remains low across all models tested, including current frontier ones. That means even the best AI models often fail to solve the same task the same way when given the same input twice.
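To make "outcome consistency" concrete, here is a minimal sketch of one way a team might measure it: run the same task repeatedly with the same input and report the share of runs that agree with the most common outcome. This is an illustrative metric, not the one defined in the Princeton paper, and `outcome_consistency` and the stand-in agent are hypothetical.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Hashable


def outcome_consistency(run_task: Callable[[], Hashable], trials: int = 10) -> float:
    """Share of trials that produced the most common outcome (1.0 = fully consistent)."""
    if trials < 1:
        raise ValueError("trials must be at least 1")
    outcomes = Counter(run_task() for _ in range(trials))
    return outcomes.most_common(1)[0][1] / trials


# Stand-in for an AI agent given the same input on every call.
# A scripted sequence replaces a real model so the example is repeatable.
fake_outcomes = cycle(["pass", "pass", "fail", "pass", "fail"])

score = outcome_consistency(lambda: next(fake_outcomes), trials=10)
print(score)  # 0.6
```

An agent like this one would look acceptable on a single benchmark run that happened to pass, yet it behaves differently on identical input four times out of ten.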

The Princeton authors propose four reliability dimensions adapted from safety-critical engineering disciplines (aviation, nuclear power, automotive systems, and industrial process control): consistency, robustness, predictability, and safety. None is captured by accuracy benchmarks alone.

For a Chief Technology Officer at a United States retailer, the implication is direct. Building capable AI is not the same as building dependable AI.[13] & [14]

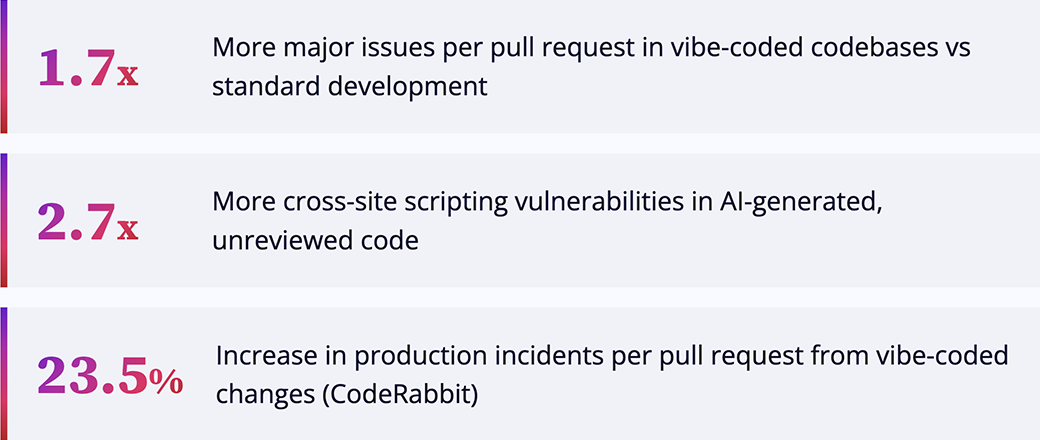

7. The Hard Cost: 23.5% More Production Incidents

The economics are now measurable. CodeRabbit's December 2025 analysis measured "vibe coding" pull requests (code generated by AI and shipped without proper review) against standard development practice across enterprise codebases:

- 1.7x more major issues per pull request

- Up to 2.7x more cross-site scripting vulnerabilities (a common attack where malicious code is injected into trusted websites)

- 23.5% increase in production incidents per pull request

An ICSE 2026 meta-analysis across 101 academic sources found that vibe-coded applications, software generated from prompts and shipped without structured Quality Assurance, accumulate technical debt at roughly 3x the rate of traditionally developed software.

IBM's 2024 Cost of a Data Breach report pegged the global average breach at $4.88 million. When AI-generated security flaws meet AI-generated production volume, the math gets ugly fast for any consumer-facing site. [15] & [16]

8. The Industry Just Pooled $12.5 Million to Respond

On March 17, 2026, the Linux Foundation announced a $12.5 million initiative, backed by Anthropic, Amazon Web Services, GitHub, Google, Google DeepMind, Microsoft, and OpenAI. The funding goes to the Alpha-Omega Project and the Open Source Security Foundation, and is aimed at helping open source maintainers handle the flood of AI-generated security findings overwhelming their projects.

The pressure on maintainers is real and measurable. NIST's National Vulnerability Database now has more than 30,000 vulnerabilities (CVEs) backlogged, with submissions growing 263% from 2020 to 2025 as AI accelerates discovery faster than human teams can triage.

This is a coordinated industry response. The largest cloud providers and model providers, companies whose entire business depends on AI coding tools succeeding, have pooled funding to handle the consequences of their own products. The signal is clear. AI-generated code is not going away, and existing security tools cannot keep up unaided.[17] & [18]

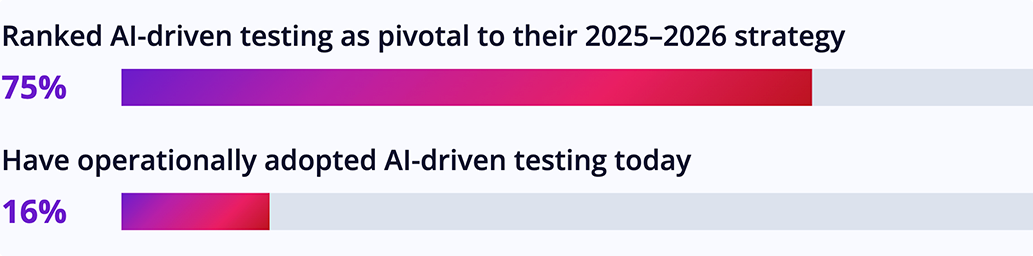

9. The Adoption Gap: 75% Want AI Testing, Only 16% Have it

Most enterprises know the answer to AI-generated code is AI-driven testing. They have not built it yet.

According to the 2025 State of Continuous Testing Report (Perforce industry survey), 75% of organizations ranked AI-driven testing as a pivotal component of their 2025-2026 strategy. Only 16% have operationally adopted it. That 59-point gap is where the Amazon-style failures happen.

The bottleneck is not tooling. It is orchestration. Most Quality Assurance stacks at United States retailers were built for slower, sequential release cycles, not for AI outputs that can change between runs. The validation discipline Princeton's framework demands (consistency, robustness, predictability, safety) is not yet wired into the build-and-release pipeline at most enterprises.

The platform vendors have moved fast. The retailers using them have not. [19] & [20]

10. The Quality Engineering Vendor Market Has Already Pivoted

The vendor landscape moved fast in early 2026. The signal of where the market is heading came on March 10, 2026, when Tricentis, one of our partners, launched its Agentic Quality Engineering Platform. It ties together test automation, test management, performance testing, and quality intelligence under one orchestration layer, with built-in governance and human review gates.

Other vendors are moving in the same direction. Mabl, Testsigma, ACCELQ, Applitools, and Katalon all have agentic testing capabilities on the market today. Forrester and Gartner both published their first-ever rankings for AI-Augmented Software Testing in 2026.

Kevin Thompson, Tricentis Chief Executive Officer, framed the bet directly. "Enterprises demand speed, but they cannot afford to introduce risk through unsecure or low-quality AI-generated code."

For United States e-commerce leaders, the 2026 question is no longer whether to deploy AI-driven Quality Engineering. It is which platform combination fits the existing tech stack, and how fast the integration can be done without disrupting peak-season releases.[21] & [22]

11. The Next Crisis: AI-Generated Test Slop

While the industry races to build AI-driven testing platforms, a second risk is forming. The same dynamic that produces AI-generated code slop will produce AI-generated test slop: tests that compile, run, and pass, but do not actually validate meaningful behavior.

Build-and-release pipelines (also known as Continuous Integration and Continuous Deployment) can enforce coverage thresholds. They cannot ensure tests validate the right thing. Teams will discover the gaps in production, not before.
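A small example shows why coverage alone is not enough. The sketch below is a hand-rolled, single-mutant version of the idea behind mutation testing: a test only counts as meaningful if it passes on the real code and fails on a deliberately broken copy. The function names and the discount logic are hypothetical and exist only for illustration.

```python
def apply_discount(price: float, percent: float) -> float:
    """Code under test: reduce the price by the given percentage."""
    return price * (1 - percent / 100)


def mutant_apply_discount(price: float, percent: float) -> float:
    """Deliberately broken copy: adds the discount instead of subtracting it."""
    return price * (1 + percent / 100)


def weak_test(fn) -> bool:
    """Executes every line of fn (full coverage) but only checks the return type."""
    return isinstance(fn(100.0, 20.0), float)


def strong_test(fn) -> bool:
    """Checks the behavior that matters: 20% off 100 is 80."""
    return abs(fn(100.0, 20.0) - 80.0) < 1e-9


def catches_mutant(test) -> bool:
    """True only if the test passes on the real code and fails on the broken copy."""
    return test(apply_discount) and not test(mutant_apply_discount)


print(catches_mutant(weak_test))    # False
print(catches_mutant(strong_test))  # True
```

Both tests would report the same line coverage and both would pass in the pipeline. Only the second would stop a pricing bug from reaching checkout.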

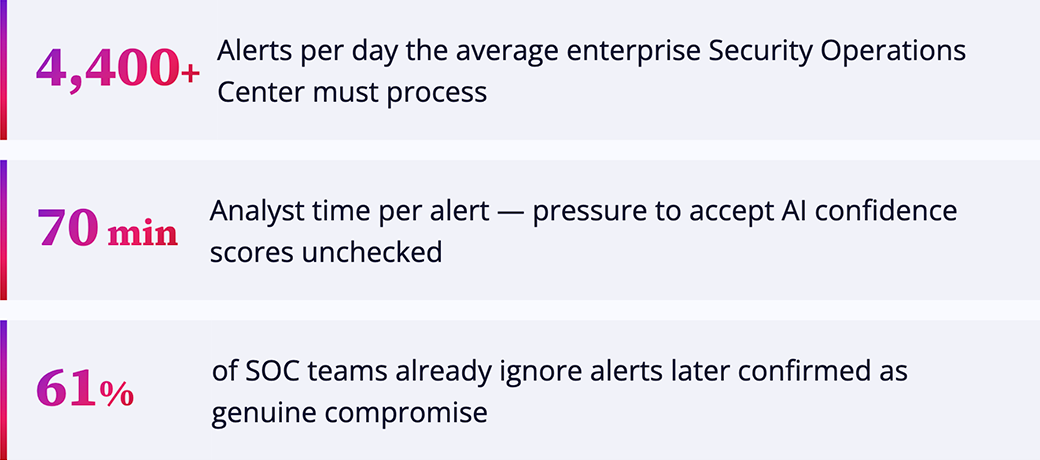

The pattern from security operations is the cautionary tale. The average enterprise Security Operations Center receives more than 4,400 alerts per day, with analysts allocated 70 minutes per investigation. When AI surfaces a classification with a confidence score, a junior analyst under time pressure accepts it. 61% of Security Operations teams already report ignoring alerts that were later confirmed as genuine compromises.

The Quality Engineering equivalent is already arriving. AI-generated tests rubber-stamped by AI-generated test reviewers, with no human in the loop until an outage forces one. The defense is not more automation. It is confidence engineering: evaluating test agents for coverage relevance, edge case discovery, and failure-mode realism, not just pass rates. [23]

GSPANN's Take: How United States E-commerce Wins This

The real lesson from Amazon is not that AI coding tools are dangerous. It is that velocity without verification is a temporary advantage and a permanent liability.

For United States retailers, the stakes are higher than for almost any other industry. Holiday peak traffic. Multi-channel inventory. Payment compliance. Customer trust that does not survive a six-hour outage. The 90-day reset Amazon imposed across 335 systems is the cost of pretending the verification layer was already there.

GSPANN has spent two decades helping United States e-commerce leaders build verification layers that scale with their tech velocity. The path forward is well-mapped, even if it is unglamorous:

- Treat AI-generated code as Draft Zero. Every line is a hypothesis until validated. Senior engineer review is a feature, not a bottleneck.

- Adopt AI-driven Quality Engineering in parallel with AI coding. Orchestrate agentic test automation so that validation capacity keeps pace with AI-accelerated development cycles.

- Embed the four Princeton reliability dimensions into release readiness: consistency, robustness, predictability, and safety. Make them gating criteria, not afterthoughts.

- Make security testing first-class in the build-and-release pipeline, not a post-deployment scan. The 70% production-vulnerability rate is the cost of treating security as an afterthought.

- Audit the test suite itself, not just the production code. AI-generated tests are the next slop frontier.
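To show what "gating criteria" could look like for the four reliability dimensions listed above, here is a minimal sketch of a release-readiness check. The scores, the minimum values, and the names `ReliabilityScores`, `MINIMUMS`, and `failed_dimensions` are assumptions made for this example; how each dimension is actually measured is left to the team.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ReliabilityScores:
    """One score per dimension, each on a 0.0 to 1.0 scale (an assumed convention)."""
    consistency: float
    robustness: float
    predictability: float
    safety: float


# Hypothetical minimums; real values depend on the system and its risk profile.
MINIMUMS = ReliabilityScores(
    consistency=0.95, robustness=0.90, predictability=0.90, safety=0.99
)


def failed_dimensions(
    scores: ReliabilityScores, minimums: ReliabilityScores = MINIMUMS
) -> list[str]:
    """Names of the dimensions that fall below their minimum."""
    return [
        f.name
        for f in fields(scores)
        if getattr(scores, f.name) < getattr(minimums, f.name)
    ]


def release_ready(scores: ReliabilityScores) -> bool:
    """A release is ready only when every dimension clears its minimum."""
    return not failed_dimensions(scores)


candidate = ReliabilityScores(
    consistency=0.97, robustness=0.85, predictability=0.93, safety=0.995
)
print(failed_dimensions(candidate))  # ['robustness']
print(release_ready(candidate))      # False
```

The point of the design is that one weak dimension blocks the release. A high average cannot compensate for a low robustness score.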

GSPANN's Quality Engineering practice combines partnerships with leading testing platforms, proprietary accelerators for intelligent test orchestration and self-healing scripts, and deep delivery experience across United States retail, manufacturing, and high-tech. We help our clients ship AI-era code at AI-era velocity, with the verification discipline that keeps them out of the headlines.

The companies winning in 2026 are not the ones moving fastest with AI. They are the ones whose verification layer scales as fast as their generation layer. That is the architecture decision that defines the next 18 months.[24]

All References:

Ref 1: https://lightrun.com/blog/the-amazon-outage-warning-ai-agent-blind/

Ref 2: https://vibegraveyard.ai/story/amazon-ai-code-retail-outages/

Ref 5: https://creati.ai/ai-news/2026-03-13/amazon-90-day-code-safety-reset-ai-agent-retail-outages-2026/

Ref 7: https://lightrun.com/blog/the-amazon-outage-warning-ai-agent-blind/

Ref 8: https://techinformed.com/seven-in-10-firms-see-ai-code-flaws-in-production/

Ref 10: https://stackoverflow.blog/2026/02/18/closing-the-developer-ai-trust-gap/

Ref 12: https://cybernews.com/ai-news/amazon-aws-disrupted-ai-coding-tool-kiro/

Ref 13: https://arxiv.org/html/2602.16666v1

Ref 16: https://hatchworks.com/blog/gendd/cost-of-vibe-coding/

Ref 17: https://www.linuxfoundation.org/press/linux-foundations-alpha-omega-project-awards-12-5-million

Ref 19: https://www.testdevlab.com/blog/ai-augmented-software-testing-future-of-qa

Ref 21: https://www.tricentis.com/news/tricentis-introduces-agentic-software-quality-platform

Ref 22: https://pctechmag.com/2026/04/best-ai-agents-for-software-testing-in-2026/

Ref 24: https://www.gspann.com/services/it-services/quality-engineering-and-assurance/